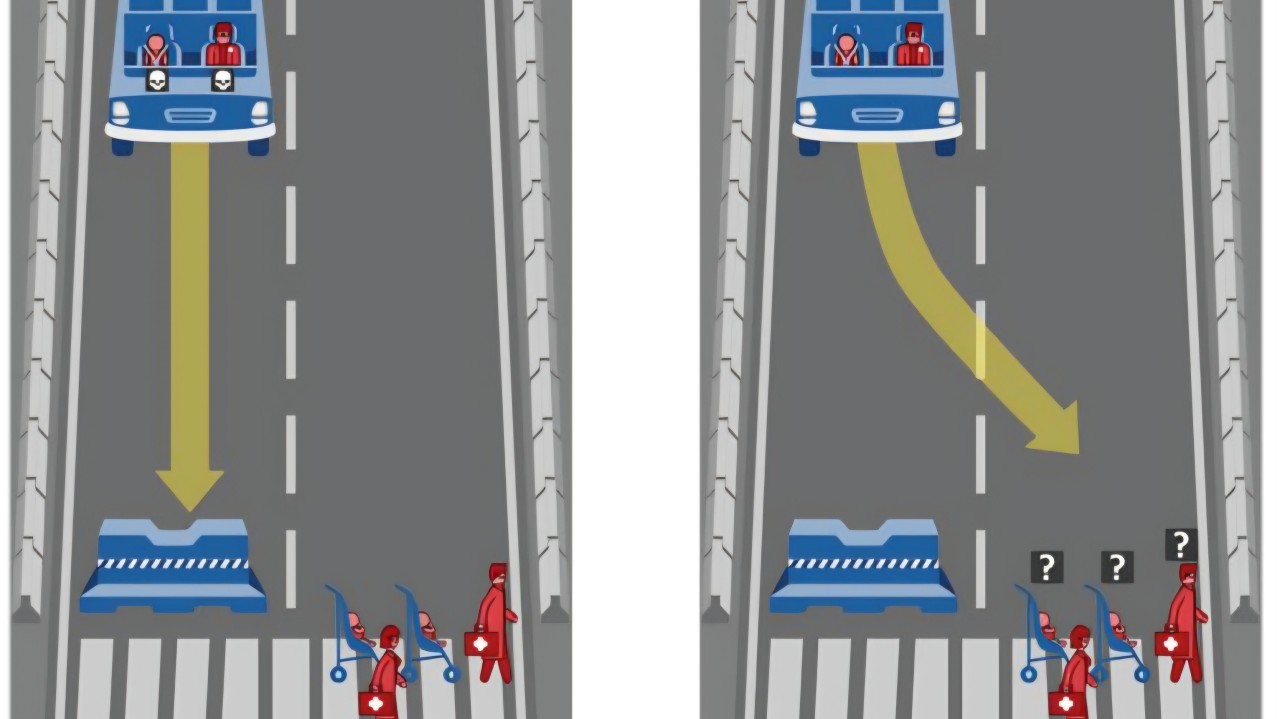

Researchers at MIT ran a global experiment known as the Moral Machine. They put a question to over 2 million people across 233 countries and territories: if a self-driving car had to choose between saving five pedestrians and saving one passenger, what should it do?

The collective response was not simple. People in different parts of the world made different choices. Some prioritised the young over the old. Others, law-abiding citizens over jaywalkers. Culture, religion, and personal beliefs shaped each person’s decision. If the humans training AI systems can’t agree on what’s ethical, how do we expect the machines to?

Most organisations aren’t run by a Bond villain sitting in a wingback chair, stroking a white Persian cat while plotting their next move. They’re made up of people making fast, often well-intentioned choices under pressure. That’s the real challenge with ethics. It isn’t about good versus bad. It’s the end result of thousands of small decisions made every day, each one shaped by context, values and trade-offs.

Let’s consider how this plays out when we implement AI. Take the well-known case of Stanford Medicine’s COVID-19 vaccine rollout. Stanford used an algorithm to prioritise vaccine distribution, weighting age and seniority to protect those at higher medical risk. What the algorithm missed was that junior doctors spent the most time with patients, while senior doctors had less face time with them. It optimised for one definition of fairness, but it was the wrong one.

The same pattern appeared when the UK used an algorithm to assign exam grades during the 2020 lockdown. It combined schools’ past results with teachers’ rankings of their students to predict outcomes. Nearly 40% of grades came out lower than teachers had predicted. Students at historically lower-performing schools were marked down, even when they had ranked top of their class. The aim was consistency, but the outcome was inequality. The algorithm was built to preserve the past, not to reward individual potential.

Another example comes from online advertising. A company found that its pricing algorithm was systematically offering lower prices to higher-income users. Why? The higher-income group had historically responded better to campaigns. The model was optimising effectively for engagement and sales, but in doing so it was discriminating against people on lower incomes.

We’ve also seen how generative AI can turn ethics into headlines overnight. During India’s 2024 election, deepfakes were used to resurrect deceased politicians and to make candidates appear to speak fluently in dozens of regional languages. What began as a tool for outreach became a way to deliver customised, persuasive messages to micro-targeted audiences, aimed at shifting the outcome of the election. With innovation moving faster than regulation, decisions about how to use the technology fall to leaders.

These situations are not the result of bad intent. They are the result of human assumptions and values. That’s what makes AI ethics so complex. The question isn’t whether we can build the technology, but how we decide what doing the right thing looks like when every outcome has consequences. We know ethical decisions rarely come down to a clear right and wrong. They sit in the grey space between efficiency and fairness, profit and privacy, convenience and consent. And the more we automate, the more these grey spaces multiply.

AI ethics isn’t solely a technology or data science responsibility; it’s a leadership one. Ethics is determined by who is in the room, what they value, and how they choose to define success. This is why diverse teams are critical: they see risks that others will miss.

Every organisation needs to be explicit about what it stands for: what fairness means in its context, whose interests it protects, and which outcomes it will not accept, even if they’re profitable. Leaders must understand their organisation’s position and use their own moral compass when it comes to using AI to make decisions.

We all know data can be made to tell multiple stories. The same data set can produce entirely different outcomes depending on the assumptions behind it. Leaders should start with visibility: know what data your models are trained on and where bias might already live. Test the outcomes, not just the logic, and keep humans in the loop to challenge decisions.

Leaders should also create clear ethical escalation paths, so that an engineer, data analyst or product manager has somewhere to go when something doesn’t feel right. If ethics is everyone’s job but no one’s responsibility, it quickly gets lost in the noise. Another useful action is to add items to pre-launch checklists that make teams pause and ask: Who could this harm? Who might it exclude? What are the unintended consequences?

Beyond these foundations, the organisations leading the way are building formal AI ethics boards and cross-functional review councils. These groups bring together data science, legal, product, HR, marketing and customer experience. Their role isn’t to slow progress but to ensure that technology decisions align with organisational values before products reach the market. They are also educating the entire organisation. Every function that touches data, from HR to marketing to product, contributes to how ethical or biased your systems become. If your people don’t understand the implications of the data, you lose control of the outcomes it generates.

We are now seeing regulators start to catch up. The EU AI Act and the UK’s emerging AI frameworks are both steps towards accountability. But compliance will always be the minimum, and ethical leadership has to go further.

The companies that treat ethics as part of innovation are the ones that will build trust. And trust is what determines whether people choose to use your product, believe in your brand, or work for your organisation.

Someone once said to me, “The geeks aren’t going to inherit the earth, but our algorithms might.” This has stuck with me and captures a rather uncomfortable truth. The systems we build will outlive us, their creators, and the ethics of our decisions today will shape the world the next generation inherits. If humans can’t agree on ethics, how can machines?

Test your ethics:

http://moralmachine.mit.edu/

https://www.survivalofthebestfit.com/game/

Source: https://www.linkedin.com/pulse/humans-cant-agree-ethics-how-can-machines-ai-series-nadine-thomson-5prre/